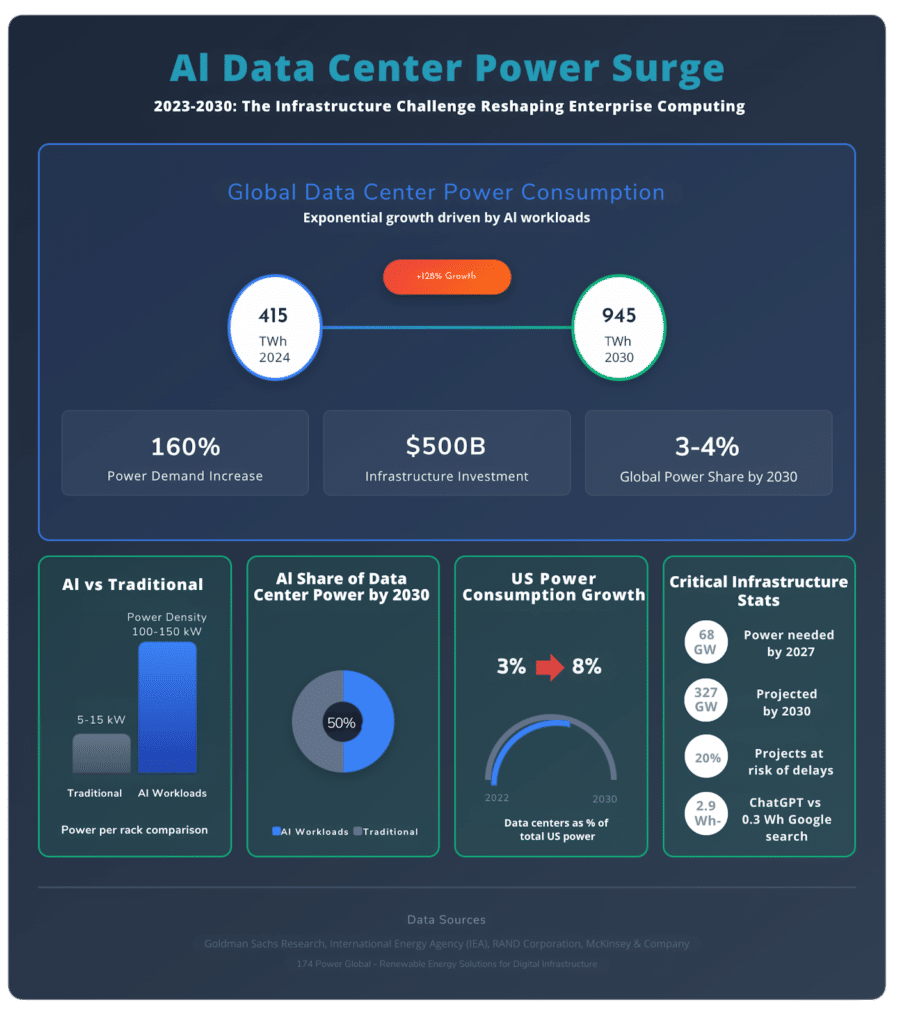

Goldman Sachs Research projects a 165% increase in data center power demand by 2030, driven primarily by artificial intelligence deployment across enterprises. Growth on this scale signals a fundamental shift in how organizations must approach infrastructure planning. The International Energy Agency estimates that data center electricity consumption will more than double to reach 945 terawatt-hours by 2030, which makes comprehensive renewable energy solutions critical for sustainable AI operations.

Unlike traditional data center expansions that followed predictable growth patterns, AI workloads place unprecedented demands on data center power. These facilities require fundamentally different infrastructure approaches, from cooling systems capable of handling extreme heat densities to power delivery systems that can support continuous, high-intensity operations. Enterprise leaders who master this infrastructure transformation will gain significant competitive advantages in the AI-driven economy.

Understand the Exponential Power Demand Associated with AI Data Centers

The scale of AI’s infrastructure requirements defies conventional data center planning models. RAND Corporation research indicates that AI data centers could require 68 gigawatts of power capacity by 2027, close to California's total power capacity in 2022, and 327 gigawatts by 2030. This exponential trajectory stems from the computational intensity of machine learning operations, particularly large language model training and inference workloads.
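The annual growth rate implied by the two projections above can be checked with a short calculation. This is an illustrative sketch only: the function name is hypothetical, and it assumes smooth compound growth between the two quoted figures, which the underlying research does not necessarily claim.

```python
def implied_annual_growth(start_gw: float, end_gw: float, years: int) -> float:
    """Compound annual growth rate that takes start_gw to end_gw in `years` years."""
    if start_gw <= 0 or years <= 0:
        raise ValueError("start_gw and years must be positive")
    return (end_gw / start_gw) ** (1 / years) - 1


# Figures quoted in the text: 68 GW (2027) and 327 GW (2030).
rate = implied_annual_growth(68, 327, 2030 - 2027)
print(f"Implied growth: {rate:.0%} per year")
```

Under that assumption, capacity would need to grow by roughly two thirds every year, which is why linear planning models tend to undershoot.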

Traditional enterprise data centers typically operated with power densities of 5-15 kilowatts per rack. Modern AI installations routinely exceed 100 kilowatts per rack, with some specialized configurations reaching 150 kilowatts or higher. McKinsey analysis shows that ten years ago, a 30-megawatt facility was considered large, while today’s AI data centers commonly require 200 megawatts or more.
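To make the density shift concrete, the sketch below converts a facility power budget into a rack count. It is a simplification under a stated assumption: all facility power is treated as available to IT equipment, with cooling and distribution overhead ignored. The function name is hypothetical.

```python
def racks_supported(facility_mw: float, kw_per_rack: float) -> int:
    """Number of racks a power budget can feed, ignoring cooling and other overhead."""
    if kw_per_rack <= 0:
        raise ValueError("kw_per_rack must be positive")
    return int((facility_mw * 1000) // kw_per_rack)


# A 30 MW facility at a traditional 10 kW per rack.
legacy_racks = racks_supported(30, 10)
# A 200 MW facility at an AI-class 100 kW per rack.
ai_racks = racks_supported(200, 100)
print(legacy_racks, ai_racks)
```

With these inputs, the larger AI facility feeds fewer racks than the older one, even though it draws more than six times the power.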

The Scale of AI Infrastructure Transformation

The financial implications extend far beyond initial capital expenditure. McKinsey estimates that providing the necessary 50+ gigawatts of additional data center capacity in the United States alone will require over $500 billion in infrastructure investment. This massive deployment creates cascading effects throughout the energy ecosystem, from transmission infrastructure upgrades to renewable energy project development.
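A rough unit cost follows from the two figures above. The sketch below is back-of-envelope arithmetic, not a cost model: both inputs are quoted as lower bounds ("over $500 billion", "50+ gigawatts"), so the ratio is only indicative, and the function name is hypothetical.

```python
def implied_cost_per_mw(total_usd: float, capacity_gw: float) -> float:
    """Average investment per megawatt implied by a total spend and a capacity figure."""
    if capacity_gw <= 0:
        raise ValueError("capacity_gw must be positive")
    return total_usd / (capacity_gw * 1000)


cost_per_mw = implied_cost_per_mw(500e9, 50)
print(f"about ${cost_per_mw / 1e6:.0f} million per MW")

# Applied to a 200 MW facility, the size cited earlier for AI data centers.
facility_mw = 200
print(f"{facility_mw} MW facility: about ${cost_per_mw * facility_mw / 1e9:.0f} billion")
```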

Power consumption patterns also differ dramatically from traditional workloads. AI training operations often run continuously for weeks or months, creating sustained peak demand rather than the variable loads typical of conventional applications. This consistency actually simplifies some planning aspects while intensifying the absolute power requirements.

Beyond Traditional Data Center Requirements

Enterprise AI infrastructure planning must account for unique operational characteristics that separate these facilities from conventional data centers. Latency requirements vary significantly between AI training and inference workloads, affecting geographic distribution strategies. Training operations can tolerate higher latency, enabling deployment in remote locations with abundant power, while inference workloads require proximity to end users.

The computational architecture also drives different infrastructure needs. Graphics processing units (GPUs) and specialized AI chips generate substantially more heat per unit area than traditional servers, necessitating advanced cooling solutions and redundant power delivery systems. These high-density configurations require careful capacity planning to avoid thermal throttling and performance degradation.

Strategic Capacity Planning for AI Data Centers

Effective capacity planning for AI workloads requires sophisticated forecasting models that account for rapidly evolving technology and unpredictable demand spikes. Gartner projects that by 2025, over 70% of organizations will embrace predictive analytics for infrastructure capacity planning, highlighting the critical importance of data-driven optimization approaches.

Modern AI capacity planning integrates multiple variables: model complexity, training duration, inference volume, and hardware efficiency improvements. Organizations must balance immediate deployment needs against future scalability requirements, particularly given the long lead times for major infrastructure investments. Planning horizons that once extended 3-5 years now require 7-10 year projections to accommodate the full lifecycle of AI infrastructure investments.

Implementing Predictive Capacity Models

Advanced capacity planning leverages machine learning algorithms to analyze historical usage patterns and predict future resource requirements. These models incorporate external factors such as business growth projections, AI adoption rates, and technology roadmaps to generate more accurate forecasts than traditional linear extrapolation methods.

Real-time monitoring systems provide continuous feedback loops that refine capacity models based on actual performance data. Organizations implementing these predictive approaches report 25-40% improvements in resource utilization efficiency and significantly reduced risk of capacity shortfalls during critical AI deployment phases.
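The gap between linear extrapolation and a growth-aware forecast can be illustrated with a small sketch. It does not use a machine learning model; it swaps in a much simpler technique, a least-squares fit on the logarithm of load, to show why the choice of model matters. The function names and the load figures are hypothetical.

```python
import math


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = a + b*x; returns (a, b)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    b = sxy / sxx
    return mean_y - b * mean_x, b


def forecast_linear(xs, ys, x_future):
    """Extend the straight-line trend to x_future."""
    a, b = fit_line(xs, ys)
    return a + b * x_future


def forecast_exponential(xs, ys, x_future):
    """Fit a line to log(y), which assumes constant percentage growth."""
    a, b = fit_line(xs, [math.log(y) for y in ys])
    return math.exp(a + b * x_future)


# Hypothetical quarterly peak load in MW (illustrative numbers only).
quarters = [0, 1, 2, 3, 4]
load_mw = [10, 13, 17, 22, 29]

print(f"linear, quarter 8:      {forecast_linear(quarters, load_mw, 8):.1f} MW")
print(f"exponential, quarter 8: {forecast_exponential(quarters, load_mw, 8):.1f} MW")
```

On data that grows by a roughly constant percentage, the straight-line forecast falls well below the exponential one a year out. Refitting as new monitoring data arrives is one simple form of the feedback loop described above.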

Load Balancing and Resource Distribution

Geographic distribution plays a crucial role in optimizing both performance and costs. Training workloads can be distributed to regions with abundant renewable energy and lower power costs, while inference operations remain closer to population centers. This hybrid approach requires sophisticated orchestration systems that can dynamically allocate workloads based on real-time power availability and cost.
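The placement rule described here can be sketched as a small selection function. Everything in it is hypothetical: the `Site` fields, the site names, the prices, and the 20 ms latency limit are illustrative assumptions, and a production orchestrator would weigh many more factors.

```python
from dataclasses import dataclass


@dataclass
class Site:
    name: str
    power_cost_usd_per_mwh: float
    latency_ms_to_users: float
    available_mw: float


def place_workload(kind, required_mw, sites, max_inference_latency_ms=20.0):
    """Return the cheapest site with enough power, or None if nothing fits.

    Inference workloads must also meet the latency limit; training is
    treated as latency-tolerant.
    """
    candidates = [s for s in sites if s.available_mw >= required_mw]
    if kind == "inference":
        candidates = [
            s for s in candidates
            if s.latency_ms_to_users <= max_inference_latency_ms
        ]
    if not candidates:
        return None
    return min(candidates, key=lambda s: s.power_cost_usd_per_mwh)


# Hypothetical sites with illustrative numbers.
sites = [
    Site("remote-site", power_cost_usd_per_mwh=35, latency_ms_to_users=60, available_mw=150),
    Site("metro-site", power_cost_usd_per_mwh=80, latency_ms_to_users=8, available_mw=40),
]

print(place_workload("training", 100, sites).name)
print(place_workload("inference", 10, sites).name)
```

Re-running the selection as prices and available capacity change gives a basic version of dynamic allocation.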

Network infrastructure becomes increasingly critical as workloads are distributed across multiple locations. High-bandwidth, low-latency interconnects enable seamless resource sharing and load balancing between facilities, maximizing overall system efficiency while maintaining performance standards.

Power Infrastructure Optimization Strategies

Strategic optimization of power infrastructure requires comprehensive approaches that address both immediate operational needs and long-term sustainability goals. Successful AI enterprises implement multi-layered strategies that combine technological innovation with operational excellence.

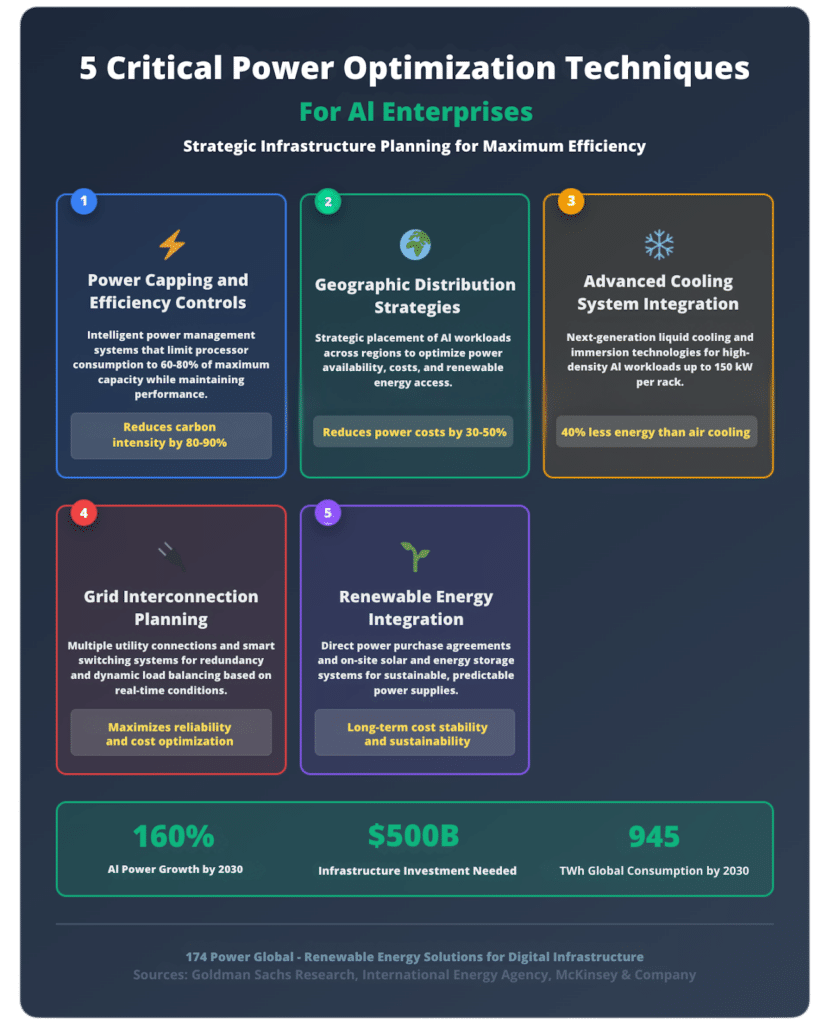

5 Critical Power Optimization Techniques for AI Enterprises

- Power Capping and Efficiency Controls: MIT research demonstrates that limiting processor power to 60-80% of maximum capacity can reduce carbon intensity by 80-90% while maintaining acceptable performance levels. Advanced power management systems automatically adjust consumption based on workload priority and grid conditions, enabling more efficient resource utilization during peak demand periods.

- Geographic Distribution Strategies: Distributing AI workloads across multiple regions optimizes both power availability and costs. Remote locations with abundant renewable energy resources provide cost-effective options for training operations, while urban facilities handle latency-sensitive inference workloads. This geographic optimization can reduce overall power costs by 30-50% compared to centralized approaches.

- Advanced Cooling System Integration: Next-generation cooling technologies dramatically improve power efficiency ratios. Direct-to-chip liquid cooling systems handle power densities up to 120 kilowatts per rack while consuming 40% less energy than traditional air cooling. Immersion cooling solutions support even higher densities, enabling more compact facility designs and reduced overall power consumption.

- Grid Interconnection Planning: Strategic grid connections optimize power sourcing and improve reliability. Multiple utility connections provide redundancy and enable dynamic load balancing based on real-time power costs and availability. Smart interconnection systems automatically switch between power sources to minimize costs and environmental impact.

- Renewable Energy Integration: Direct power purchase agreements with renewable energy providers ensure sustainable, cost-predictable power supplies. On-site solar and energy storage systems provide additional resilience and can significantly reduce long-term operating costs while meeting corporate sustainability commitments.
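As an illustration of the first technique in the list, the sketch below picks a processor power cap from workload priority and grid conditions. The policy is a hypothetical example: only the 60-80% band comes from the text, while the three-step mapping and the function name are assumptions. Applying the cap to real hardware is vendor-specific and out of scope here.

```python
def choose_power_cap(max_watts: float, high_priority: bool, grid_stressed: bool) -> float:
    """Pick a per-processor power cap within the 60-80% band cited in the text.

    Hypothetical policy: urgent work on a relaxed grid gets the top of the
    band, low-priority work on a stressed grid gets the bottom, and
    everything else sits in the middle.
    """
    if max_watts <= 0:
        raise ValueError("max_watts must be positive")
    if high_priority and not grid_stressed:
        fraction = 0.80
    elif not high_priority and grid_stressed:
        fraction = 0.60
    else:
        fraction = 0.70
    return max_watts * fraction


# Hypothetical 700 W accelerator.
print(choose_power_cap(700, high_priority=True, grid_stressed=False))
print(choose_power_cap(700, high_priority=False, grid_stressed=True))
```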

Advanced Cooling Solutions for High-Density AI Workloads

Modern AI workloads generate heat densities that overwhelm traditional data center cooling systems. Liquid cooling technologies have evolved from experimental solutions to production necessities for high-performance AI deployments. These systems circulate coolant directly to processors, capturing heat at the source rather than attempting to remove it from ambient air.

Immersion cooling represents the next evolution, submerging entire servers in dielectric fluids that directly absorb heat from all components. While implementation requires significant infrastructure changes, immersion systems can handle power densities exceeding 200 kilowatts per rack while actually reducing total facility energy consumption.
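The density figures in this article suggest a simple first-pass rule for matching cooling technology to rack power. The sketch below is a rough screening aid, not an engineering guideline. The 120 kW limit for direct-to-chip cooling is the figure quoted above; the 15 kW air-cooling limit is an assumption taken from the top of the traditional 5-15 kW range. The function name is hypothetical.

```python
AIR_MAX_KW = 15               # assumption: top of the traditional 5-15 kW range
DIRECT_TO_CHIP_MAX_KW = 120   # figure quoted in the article


def suggest_cooling(rack_kw: float) -> str:
    """First-pass cooling suggestion based only on rack power density."""
    if rack_kw <= 0:
        raise ValueError("rack_kw must be positive")
    if rack_kw <= AIR_MAX_KW:
        return "air"
    if rack_kw <= DIRECT_TO_CHIP_MAX_KW:
        return "direct-to-chip liquid"
    return "immersion"


for kw in (10, 100, 200):
    print(kw, "kW ->", suggest_cooling(kw))
```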

Location Strategy: Balancing Power Availability and Performance

Site selection for AI infrastructure requires balancing multiple competing factors: power availability, network connectivity, regulatory environment, and talent access. Primary markets like Northern Virginia face power constraints, driving expansion to secondary markets in Indiana, Iowa, and Wyoming, where power remains abundant.

However, remote locations present trade-offs in network connectivity and operational complexity. Successful site strategies often employ hub-and-spoke models, with major facilities in traditional markets handling inference and customer-facing operations while remote sites focus on training and batch processing workloads.

Future-Proofing Your AI Infrastructure Investment

Investment decisions made today will determine competitive positioning throughout the AI transformation. Organizations must anticipate technological evolution while maintaining flexibility for rapid adaptation to new requirements and opportunities.

The technology roadmap for AI infrastructure continues accelerating, with new processor architectures, cooling technologies, and power delivery systems emerging regularly. Planning frameworks must accommodate uncertainty while ensuring adequate capacity for anticipated growth scenarios.

Emerging Technologies and Infrastructure Evolution

Next-generation AI processors promise substantial efficiency improvements, potentially reducing power requirements per unit of computational output by 50% or more over the next five years. However, increasing model complexity and deployment scale may offset these gains, requiring continued infrastructure expansion despite technological advances.
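The offsetting effect can be expressed as a single multiplication. In the sketch below, the 50% efficiency gain is the figure from the text, while the compute growth values and the function name are hypothetical inputs chosen for illustration.

```python
def net_power_multiplier(compute_growth: float, efficiency_gain: float) -> float:
    """Change in total power when compute grows and power per unit of compute falls.

    compute_growth: multiplier on computational output (5.0 means five times more).
    efficiency_gain: fractional cut in power per unit of compute (0.5 means 50% less).
    """
    if not 0 <= efficiency_gain < 1:
        raise ValueError("efficiency_gain must be in [0, 1)")
    return compute_growth * (1 - efficiency_gain)


# With a 50% efficiency gain, power stays flat only if compute merely doubles.
print(net_power_multiplier(2.0, 0.5))
# If compute grows fivefold instead, total power still rises 2.5 times.
print(net_power_multiplier(5.0, 0.5))
```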

Quantum computing integration represents a longer-term consideration that could dramatically alter infrastructure requirements. While practical quantum AI applications remain years away, infrastructure designs should consider eventual hybrid classical-quantum architectures that may require specialized power and cooling systems.

ROI Optimization and Investment Planning

Infrastructure investments for AI require careful financial modeling that accounts for rapid technological change and evolving business requirements. Traditional depreciation schedules may not align with actual equipment lifecycles in the fast-moving AI sector, necessitating more aggressive replacement planning.

Modular infrastructure designs provide flexibility to upgrade components incrementally rather than requiring complete facility replacements. This approach reduces capital risk while enabling organizations to incorporate new technologies as they become available and proven.

Building a Comprehensive AI Infrastructure Strategy

Successful AI infrastructure strategies integrate technical requirements with business objectives, regulatory compliance, and sustainability commitments. Organizations must develop comprehensive roadmaps that address both immediate deployment needs and long-term strategic positioning.

Vendor partnerships become crucial for managing the complexity of modern AI infrastructure. No single provider can deliver all necessary components, requiring careful ecosystem management and integration planning. Strategic partnerships with renewable energy developers ensure sustainable power supplies while reducing long-term operational costs.

Implementation Framework and Best Practices

Phased implementation approaches reduce risk while enabling organizations to learn and adapt their strategies based on operational experience. Initial deployments should focus on well-understood workloads with clear business cases, gradually expanding to more experimental applications as expertise develops.

Change management becomes critical as AI infrastructure demands new skills and operational procedures from IT teams. Investment in training and process development ensures organizations can effectively operate and optimize their AI infrastructure investments.

Performance Monitoring and Continuous Optimization

Real-time monitoring systems provide visibility into infrastructure performance and utilization patterns. Advanced analytics identify optimization opportunities and predict maintenance requirements, reducing downtime and maximizing return on infrastructure investments.

Continuous optimization processes ensure infrastructure evolves with changing requirements and technological advances. Regular assessments of power usage effectiveness, thermal management, and workload distribution identify areas for improvement and cost reduction.
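Power usage effectiveness (PUE) is the ratio of total facility energy to the energy used by IT equipment, so a value of 1.0 would mean no overhead at all. The sketch below computes it from two meter readings and flags readings above a target. The readings, the 1.3 target, and the function names are hypothetical.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness: total facility energy divided by IT energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("it_equipment_kwh must be positive")
    return total_facility_kwh / it_equipment_kwh


def needs_review(total_facility_kwh: float, it_equipment_kwh: float, target: float = 1.3) -> bool:
    """True when the measured PUE is above the target (illustrative threshold)."""
    return pue(total_facility_kwh, it_equipment_kwh) > target


# Hypothetical monthly meter readings in kWh.
print(pue(1_500_000, 1_000_000))
print(needs_review(1_500_000, 1_000_000))
```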

Planning and Securing Your AI Infrastructure

Enterprise success in the AI era depends fundamentally on infrastructure planning that anticipates and accommodates exponential growth in computational and power requirements. Organizations that develop comprehensive power strategies for AI data centers, implement sophisticated capacity planning methodologies, and embrace continuous optimization will establish significant competitive advantages.

The transformation requires unprecedented collaboration between IT, facilities, and strategic planning teams to navigate complex technical and business challenges. Success demands both technical excellence and strategic foresight to build infrastructure that can adapt and scale with rapidly evolving AI capabilities.

As enterprises embark on this infrastructure transformation journey, partnering with experienced renewable energy and infrastructure specialists like 174 Power Global becomes essential for ensuring sustainable, cost-effective, and scalable AI operations that drive long-term business success.

Frequently Asked Questions

How much power do AI data centers actually consume compared to traditional facilities? AI data centers draw far more power per rack than traditional data centers, with power densities reaching 100-150 kilowatts per rack compared to 5-15 kilowatts for conventional applications, roughly 7 to 30 times higher. The International Energy Agency projects that global data center electricity consumption will more than double by 2030, reaching 945 terawatt-hours annually.

What are the biggest infrastructure bottlenecks for AI deployment? Power availability represents the primary constraint, with utility grid limitations and transmission infrastructure upgrades creating delays for up to 20% of planned data center projects. Secondary bottlenecks include cooling system capacity for high-density workloads and specialized hardware supply chain constraints for AI-optimized equipment.

How can enterprises optimize capacity planning for AI workloads? Effective capacity planning requires predictive analytics that incorporate AI model complexity, training duration, and inference volume projections. Organizations should implement real-time monitoring systems, geographic load distribution strategies, and modular infrastructure designs that enable incremental scaling based on actual usage patterns.

What role does renewable energy play in AI infrastructure planning? Renewable energy becomes critical for both cost management and sustainability goals, with solar and wind providing the most cost-effective power sources for large-scale AI operations. Strategic power purchase agreements and on-site renewable generation can reduce long-term operating costs by 30-50% while meeting corporate environmental commitments.